Continuing my last article, I promise to cover in detail the On-Cloud infrastructure but recently there was an incident happening last week. So let me step back and discuss this error and how to analyze and solve it.

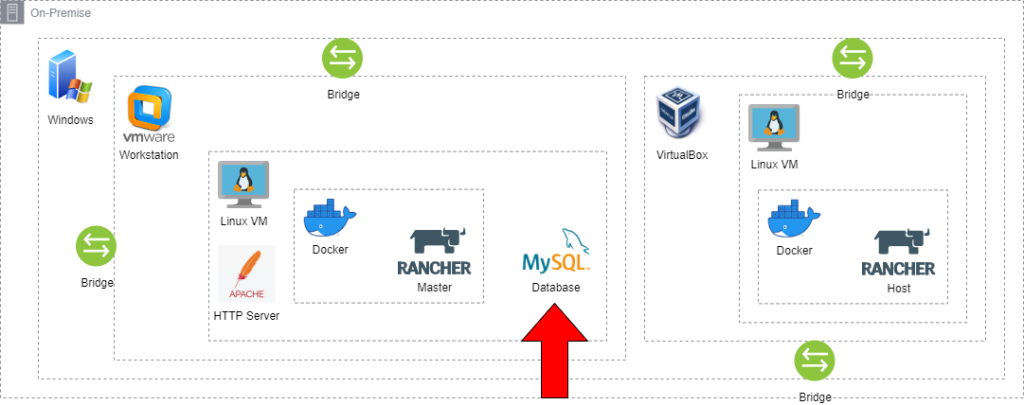

Last week, Wednesday 24th the Linux VM on VMware suddenly crashed due to SAS (RAID 0) hard disk failure. To avoid loss of the virtual machine I’ve decided to move it to another hard disk while looking for the replacement online. It took hours to copy the virtual machine but luckily the files were intact and able to boot normally. Unfortunately, somehow the database MySQL that stores the Rancher Master failed to start. So, I decided to take a break and revisit later in the evening after office hours. 😊

First, to know what has happened, I need to investigate the database log file inside the MySQL application folder, which is in my case inside the directory /var/mysql. This might be different from other Linux distributions. After that, I try to find the <hostname>.err file to see the text log inside with the following command.

$ cat <hostname>.err

Once displayed, I need to search the log that contains [ERROR] to identify the issue. Below is the sample text log.

2024-01-24 7:23:32 0 [ERROR] InnoDB: Missing MLOG_CHECKPOINT at 255886398 between the checkpoint 255886398 and the end 255886336.

2024-01-24 7:23:32 0 [ERROR] InnoDB: Plugin initialization aborted with error Generic error

2024-01-24 7:23:32 0 [Note] InnoDB: Starting shutdown…

2024-01-24 7:23:32 0 [ERROR] Plugin ‘InnoDB’ init function returned error.

2024-01-24 7:23:32 0 [ERROR] Plugin ‘InnoDB’ registration as a STORAGE ENGINE failed.

2024-01-24 7:23:32 0 [Note] Plugin ‘FEEDBACK’ is disabled.

2024-01-24 7:23:32 0 [ERROR] Unknown/unsupported storage engine: InnoDB

2024-01-24 7:23:32 0 [ERROR] Aborting

Inside the log file, I noticed the error message ‘InnoDB Missing MLOG_CHECKPOINT at [num] between the checkpoint [num] and the end [num],’ which might be the cause for MySQL failing to start. I prefer to do my research in the evening before bedtime, as it is the best time with fewer distractions from my kids and cats 😸. After spending around an hour digging through the internet, I found the solution.

Various factors can lead to a malfunction in a SQL database, but the error I’ve found specifically pertains to MySQL and MariaDB database connections. In the context of these relational databases, InnoDB serves as a storage engine. A critical component within InnoDB is the redo log, a disk-based data structure employed during crash recovery to rectify data modified by incomplete transactions. Essentially, the redo log captures encoded instructions for altering table data.

To delve a bit deeper, the redo log manifests as files labeled ib_logfileX, with the placeholder ‘X’ denoting a numerical value. These files are instrumental during crash recovery scenarios. Periodically, InnoDB initiates a checkpoint process, which plays a pivotal role in determining the log files that require flushing. This checkpoint mechanism aids in maintaining data integrity and consistency within the database.

From the above explanation, the solution to make MySQL start normally again is to delete the InnoDB log files with the following commands in Linux:

$ sudo rm ib_logfile*

Once confirmed to be deleted, I then try to start the database again, and tadaaa! MySQL successfully started 🎉

Stay tuned for my next article where I’ll dive into the details of On-Cloud infrastructure.